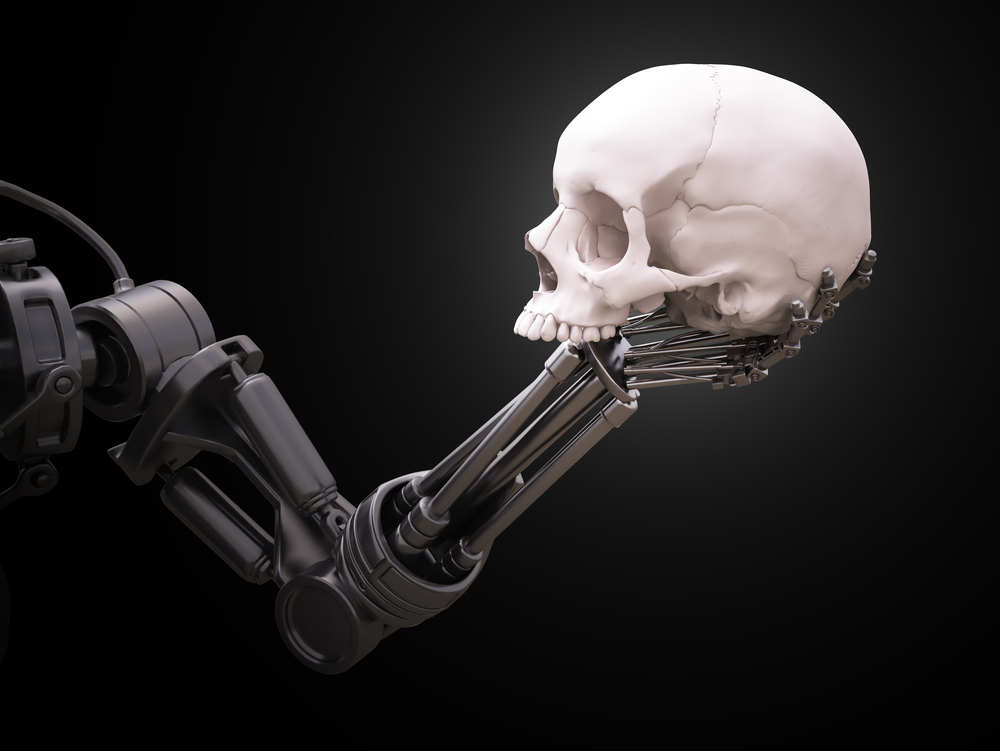

A trio of UK-based researchers from the Alan Turing Institute recently published a paper arguing that existing artificial intelligence regulations aren't good enough. They even recommended that bots be held accountable to the same standards as the engineers who create them.

Holding AI accountable

According to the researchers, several issues could cause problems in the future. They cite the lack of transparency about how AI works and the diversity of the systems being developed as examples. The paper reads:

Systems can make unfair and discriminatory decisions, replicate or develop biases, and behave in inscrutable and unexpected ways in highly sensitive environments that put human interests and safety at risk

One example the researchers cite is self-driving cars. In very specific situations, the AI may have to decide between hitting a pedestrian or swerving and injuring the driver. Either outcome could be fatal.

Moreover, since AI is already programming itself, we may soon be unable to know the reasoning behind its decisions. Leaving AI unaccountable or impossible to decipher could lead to chaos, because we won't know why it chooses to act the way it does.

The researchers therefore suggested creating guidelines covering AI and decision-making algorithms, while acknowledging that these areas are hard to regulate. Extra transparency, for example, might hurt AI performance, and companies are understandably reluctant to reveal their intellectual property to the public.

The researchers stated:

The inscrutability and the diversity of AI complicate the legal codification of rights, which, if too broad or narrow, can inadvertently hamper innovation or provide little meaningful protection.

Nevertheless, the researchers pointed out that various countries and organizations are already addressing the problem. The European Union, for example, is increasingly in favor of legislative solutions. To keep AI from getting out of hand, Google has created an AI kill switch and is developing AIs that are discouraged from disabling their own kill switches.

AI has gone awry before

The team has previously noted that we're already too dependent on algorithms and argued that an AI watchdog should be set up to make sure nothing goes wrong when these systems make important decisions about our lives.

As reported by The Guardian, algorithms have done some questionable things in the past. In Washington, DC, a school used an algorithm to assess teacher performance, and because of apparent cheating, some of the best teachers were fired.

Another example is Microsoft's Tay chatbot, which was created to talk to teens on social media and learn from those conversations. After being trolled, it went off the rails, tweeting, among other things, that the Holocaust was made up and that it supported genocide.
